Agentic Engineering Weekly for March 14-21, 2026

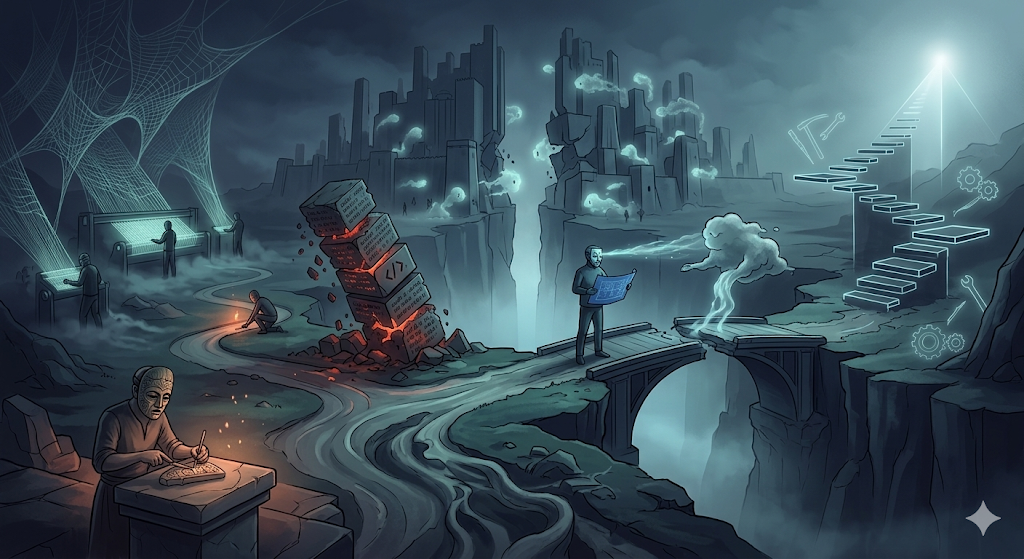

Something shifted this week: the industry started naming things it couldn't articulate six months ago. Comprehension debt, craft alienation, agentic CD. These aren't buzzwords. They're diagnostic labels for problems practitioners have been feeling but couldn't pin down. When a field starts coining precise vocabulary, it's a sign that scattered experimentation is crystallizing into shared understanding.

My top 3 picks this week

- This Has Happened Before. It's Happening Again.: Historical parallels that put the current hype in perspective (article)

- Simon Willison: Engineering practices that make coding agents work: Simon's not-a-book filled with patterns from real usage (article)

- Artificial Organizations: Some really good pointers on how to leverage AI as a non-technical leader. You'll have to wade through a bit of gippityslop, but the core message is worth it. (book)

DORA confirms: AI amplifies your bottlenecks, not your velocity

DORA's latest report delivers data that cuts through the "AI makes everything faster" narrative. Their findings show that AI amplifies existing flow: if your delivery pipeline is healthy, AI tooling accelerates it further. If your pipeline is brittle, AI makes the pain worse. The prerequisite for AI-accelerated delivery isn't a better model. It's better engineering practices.

Wes McKinney draws a sharp parallel to Brooks' The Mythical Man-Month: adding more agents to a late project makes it later. The coordination overhead doesn't vanish just because the workers are silicon. Barry O'Reilly observes that most organizations are bolting AI tools onto operating systems built for a pre-AI world, creating more noise and fragmentation rather than flow.

Doc Norton adds historical perspective, pointing to previous technology shifts that promised to eliminate developer bottlenecks but merely relocated them. The pattern is consistent: new tools expose the real constraints. If your deployment pipeline takes two weeks of manual approvals, an agent that writes code in ten minutes instead of ten hours just means the change ships in fourteen days and ten minutes instead of fourteen days and ten hours.
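To put numbers on the approval-queue example, here is a minimal sketch of the lead-time arithmetic. It assumes a fourteen-day approval wait and a human coding time of ten hours (the baseline implied by the "fourteen days and ten hours" figure). The function name and values are illustrative only and do not come from DORA or any of the cited authors.

```python
from datetime import timedelta

APPROVAL_WAIT = timedelta(days=14)  # assumed: two weeks of manual approvals


def lead_time(coding_time: timedelta, approval_wait: timedelta = APPROVAL_WAIT) -> timedelta:
    """Total time from starting a change to shipping it."""
    return coding_time + approval_wait


human = lead_time(timedelta(hours=10))    # assumed human coding time
agent = lead_time(timedelta(minutes=10))  # agent coding time from the example

saved = human - agent
improvement = saved / human  # dividing two timedeltas yields a float

print(f"human lead time: {human}")
print(f"agent lead time: {agent}")
print(f"lead time improvement: {improvement:.1%}")
```

Under these assumptions the coding step gets 60 times faster while total lead time improves by less than 3 percent, because the approval queue dominates.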

Worth reading:

- Building Foundations for Continuous (AI-accelerated) Change: DORA data you can take to your leadership, not vibes (article)

- The Mythical Agent-Month: Brooks' Law applied to agents, with the same uncomfortable conclusions (article)

- Artificial Organizations: Executive Leadership Series: Why adding AI to a broken operating model creates faster chaos (video)

- This Has Happened Before. It's Happening Again.: Historical parallels that put the current hype in perspective (article)

Craft alienation finally has a name, and it's spreading

Hong Minhee coined the term craft alienation, and it's resonating because it names a split that isn't about skill level. LLM coding assistants created a new fault line: not between good and bad developers, but between those who understand what they build and those who've outsourced that understanding. The identity crisis is real, and it's showing up everywhere from engineering blogs to the New York Times.

Ian Cooper makes a useful distinction between a "coder" and a "programmer." The coder role, translating intent into syntax, now belongs to the agent. But the programmer role, the one that designs, reasons about trade-offs, and makes judgment calls, remains fundamentally human. O'Reilly Radar's third AI Codecon is wrestling with exactly this question: what does craftsmanship look like when the craft's most visible artifact is increasingly machine-generated?

Mo Bitar takes the most provocative stance: there's no skill in AI coding. The models do the heavy lifting and you can't meaningfully change outcomes through clever prompting. Philip Kiely offers an instructive counterpoint. He works at an AI company, tried to get AI to write his book about AI, watched it completely fail, then woke up at 5am for six weeks and wrote it himself. Sometimes the craft resists automation not because the tools are bad, but because the work demands something the tools can't provide.

Worth reading:

- Why craft-lovers are losing their craft: The essay that coined the term and nailed the diagnosis (article)

- Coding Is Dead, Long Live Programming: A clear-eyed distinction between the roles AI can and can't absorb (article)

- Coding After Coders: The New York Times on why what programmers now do is "deeply, deeply weird" (article)

- I tried to AI slop my own book: Philip Kiely's honest account of where AI writing hits a wall (video)

- Software Craftsmanship in the Age of AI: O'Reilly Radar tackles the question head-on (article)

Comprehension debt names the cost we've been ignoring

Addy Osmani coined comprehension debt and the concept is sticking because it names something distinct from technical debt. Technical debt is code you know is suboptimal. Comprehension debt is code you can't even evaluate because nobody on the team understands it well enough to judge. An AI can generate a thousand lines of correct, performant code in minutes. The team then owns a thousand lines they didn't write, didn't design, and can't confidently modify.

Osmani and O'Reilly argue that specs become the forcing function in an agent-first world. If consumers can't clearly describe what they want, engineers won't be able to either, and agents certainly won't fill that gap. Spec-driven development isn't bureaucracy: it's the only reliable way to maintain comprehension when the code-generation bottleneck disappears. Gerald Sussman's warning resurfaces here too: in the days of coding agents, understanding what the code does matters more than ever.

Tom Wojcik asks the measurement question nobody's tracking: what's the real cognitive cost of prolonged AI-assisted coding? We count lines generated, PRs merged, velocity points delivered. We don't count the accumulating gap between what the codebase does and what the team actually understands about it. That gap is comprehension debt, and unlike technical debt, you can't even see it accumulating until something breaks and nobody knows why.

Worth reading:

- Comprehension Debt: the hidden cost of AI generated code: The original essay that coined the term, with practical mitigation strategies (article)

- Why You Need Spec-Driven Development in the Age of AI: Osmani and O'Reilly on specs as comprehension insurance (video)

- On The Need For Understanding: Sussman's warning made urgent by AI code generation (article)

- The Rise of Agent-First Source Code: What happens when code is optimized for agents, not humans (video)

The winning pattern is separating decisions from execution

Across co-design frameworks, quality processes, and refactoring workflows, a consistent pattern keeps emerging: the teams shipping well with agents have formalized the split between deciding what to build and executing the build. David Whitney's co-design framework makes this explicit: you own the design decisions, the agent owns the execution. Steve Yegge's approach to quality when "vibing" follows the same logic. Don't inspect the code, that's not your job. Define the quality bar and verify the output meets it.

I'm finding this maps cleanly onto Cynefin: not every AI coding problem is the same type of problem, and choosing which approach fits is the irreducibly human decision. Paint-by-numbers for the obvious, collaborative exploration for the complex. The agent doesn't know whether it's in obvious or complex territory. You do, and that judgment is the thing that can't be delegated.

This pattern also shows up in tooling. AI-assisted refactoring tools like ast-grep separate three phases: detect, preview rewrites, apply. Each phase has built-in test support, keeping the human in the decision loop at every transition. The code review skeptics at Agent Driven Development land in the same place: stop reviewing code line by line, start proving it works through continuous delivery, not ceremony. The common thread is that verification beats inspection when code-generation cost drops to near zero.
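The detect, preview, apply split can be sketched in a few lines. This is a toy illustration using only the Python standard library, not ast-grep's API: a regular expression stands in for a structural pattern, and the rule itself (rewriting `print` calls to `logger.debug`) and all function names are hypothetical.

```python
import difflib
import re

# Hypothetical rule: rewrite print(...) debugging calls to logger.debug(...).
PATTERN = re.compile(r"\bprint\((.*)\)")
REPLACEMENT = r"logger.debug(\1)"


def detect(source: str) -> list[int]:
    """Phase 1: return 1-based line numbers where the rule matches."""
    return [
        number
        for number, line in enumerate(source.splitlines(), start=1)
        if PATTERN.search(line)
    ]


def preview(source: str) -> str:
    """Phase 2: return a unified diff of the proposed rewrite; nothing is changed."""
    rewritten = PATTERN.sub(REPLACEMENT, source)
    diff = difflib.unified_diff(
        source.splitlines(keepends=True),
        rewritten.splitlines(keepends=True),
        fromfile="before",
        tofile="after",
    )
    return "".join(diff)


def apply_rewrite(source: str, approved: bool) -> str:
    """Phase 3: perform the rewrite only if a human approved the preview."""
    return PATTERN.sub(REPLACEMENT, source) if approved else source


code = "x = 1\nprint(x)\ny = x + 1\n"
matches = detect(code)                       # where would the rule fire?
diff_text = preview(code)                    # what would change?
result = apply_rewrite(code, approved=True)  # change it, after sign-off
print(diff_text)
```

The design point is that each phase returns something a person can check before the next one runs, so the decision stays with the human even when the execution is automated.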

Worth reading:

- The Programmer's Guide to Co-Designing with Agents: A practical framework for the decision/execution split (article)

- How Steve Yegge gets quality when vibing: Quality as a verification problem, not a production problem (article)

- Stop Reviewing Code. Start Proving It Works.: Why code review is the wrong ceremony for agent-generated code (article)

Agentic engineering is crystallizing into a discipline

Six months ago, "working with AI agents" was ad-hoc experimentation. This week, it's getting formal specifications. The MinimumCD community published Agentic Continuous Delivery (ACD), extending their continuous delivery framework with constraints and practices designed specifically for agent-generated changes. Bassim Eledath published an 8-level maturity model. Simon Willison published both a Pragmatic Summit talk and a written guide defining agentic engineering as a set of patterns, not a product category.

The formalization matters because it creates a shared vocabulary. When Willison defines specific patterns from real usage and David Vujic warns against falling into waterfall-style development with agents, they're having a conversation that wasn't possible six months ago. The discipline is developing its own anti-patterns, its own best practices, its own quality criteria. That's what fields look like when they transition from "interesting experiment" to "thing we need to get right."

What's notably absent from most of these frameworks is any claim that agents change the fundamentals. The MinimumCD practice guide is a phased migration from quarterly releases to continuous delivery, now extended with agent-specific guardrails. Vujic's core argument is that agile principles apply more than ever when agents are involved. The discipline is crystallizing not around new principles, but around new applications of old ones.

Worth reading:

- Agentic Continuous Delivery (ACD): The first formal CD extension for agent-generated changes (article)

- Simon Willison: Engineering practices that make coding agents work: Concrete patterns from real usage, presented at the Pragmatic Summit (video)

- What is agentic engineering?: Willison's definitive guide, refreshingly free of hype (article)

- The 8 Levels of Agentic Engineering: A maturity model from tab-complete to autonomous agent teams (article)

- Agile & Agentic Engineering: A timely reminder that agile principles didn't expire (article)

Quick Hits

- My Tesla Was Driving Itself Perfectly - Until It Crashed: A resonant metaphor for AI tools that work flawlessly until they don't (article)

- Ghuntley: software development vs software engineering: The sharpest articulation of which parts of the job are accelerating and which remain essential (article)

- Porting software is trivial now: Compress tests into specs, then use specs to drive the AI port (article)

- Domain expertise still wanted: Stack Overflow's data confirms domain knowledge as the real moat (article)

- Will Claude Code ruin our team?: Honest look at how AI coding blurs lines between engineering, PM, and design (article)

- My current favorite readings on coding with AI: Vaccari's curated list after 13 months of intense experimentation (article)

- Karpathy on No Priors: AutoResearch, AI psychosis, and the shifting engineering landscape (article)

- Terence Tao on mathematical discovery: Whether AI will accelerate scientific progress, from the world's top mathematician (podcast)

- The State of AI in 2026: What the Data Actually Shows: Better Stack surveys the current state with actual data, not hype (video)

Curated from 30 sources across articles, podcasts, and videos. Week of March 14-21, 2026.